我们以抓取博客内容为例,为大家展示如何操作。

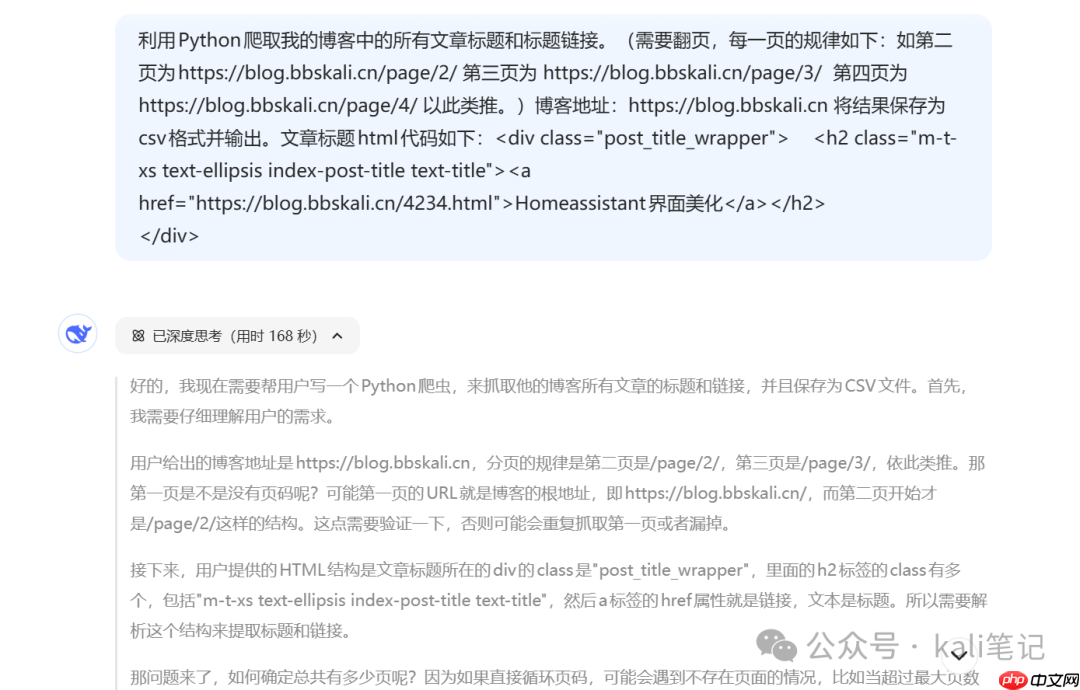

#抓取标题与链接#

使用Python获取我的博客中所有文章的标题及其对应链接。(需翻页处理,各页面URL规律如下:第二页为https://www.php.cn/link/2c2c5fd01b61e3e0e687573af8f7e1fa/page/2/,第三页为 https://www.php.cn/link/649f7e2bf4d7efb62d56f6090cf943eb https://www.php.cn/link/9191b0a3b4c41e6732dbb644bd52d6fc 将最终结果导出为csv文件。文章标题的HTML结构示例如下:

<h2 class="m-t-xs text-ellipsis index-post-title text-title"><a href="https://www.php.cn/link/2c2c5fd01b61e3e0e687573af8f7e1fa/4234.html">Homeassistant界面美化</a></h2>

HAPPY TEACHER'S DAY

狼群淘客系统基于canphp框架进行开发,MVC结构、数据库碎片式缓存机制,使网站支持更大的负载量,结合淘宝开放平台API实现的一个淘宝客购物导航系统采用php+mysql实现,任何人都可以免费下载使用 。狼群淘客的任何代码都是不加密的,你不用担心会有任何写死的PID,不用担心你的劳动成果被窃取。

0

0

可以看到,讲解非常详细。

可以看到,讲解非常详细。

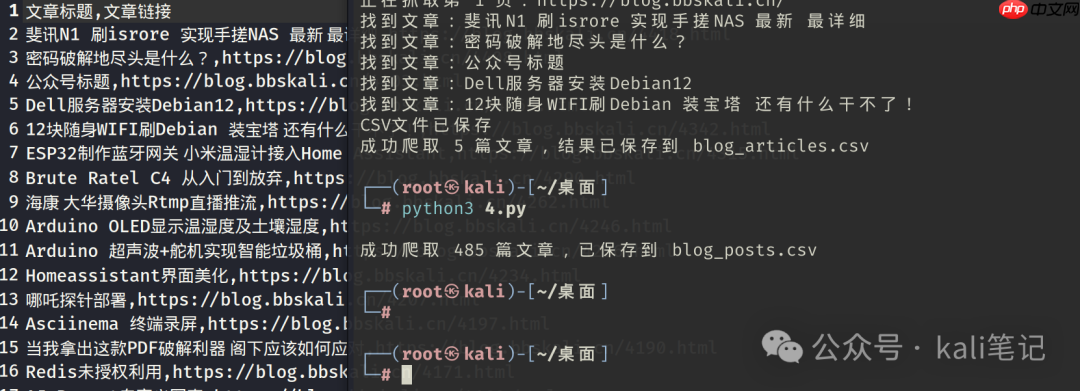

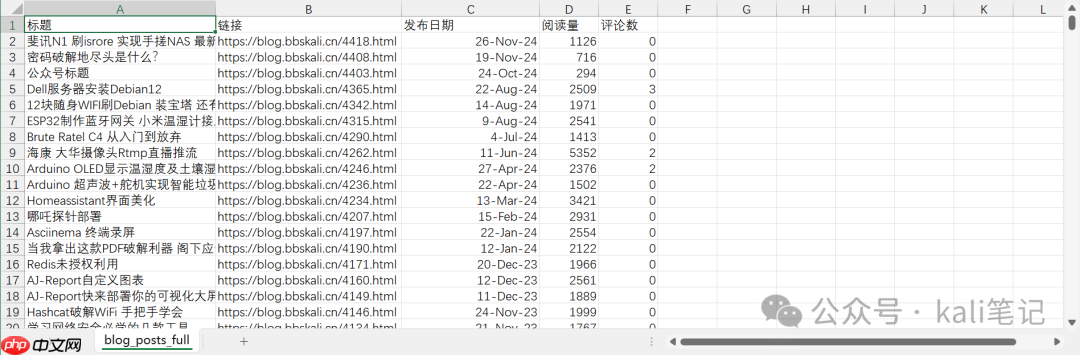

运行生成的代码,效果如下:

接下来,我们尝试更复杂一点的任务:提取每篇文章的阅读次数、评论数量以及发布时间。

接下来,我们尝试更复杂一点的任务:提取每篇文章的阅读次数、评论数量以及发布时间。

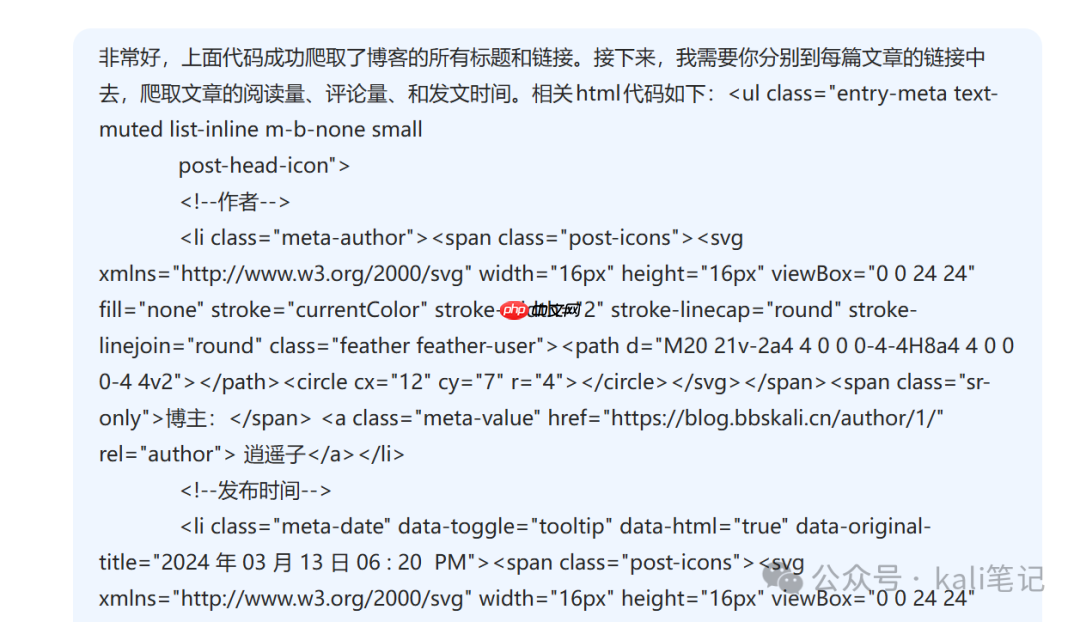

新建对话内容如下:

新建对话内容如下:

#抓取文章阅读量、评论数、发布日期#

很好,之前的代码已经成功获取了博客的所有标题和链接。现在我需要你进入每一篇文章的具体页面,提取其阅读量、评论数和发布时间。相关HTML代码结构如下:

HAPPY TEACHER'S DAY

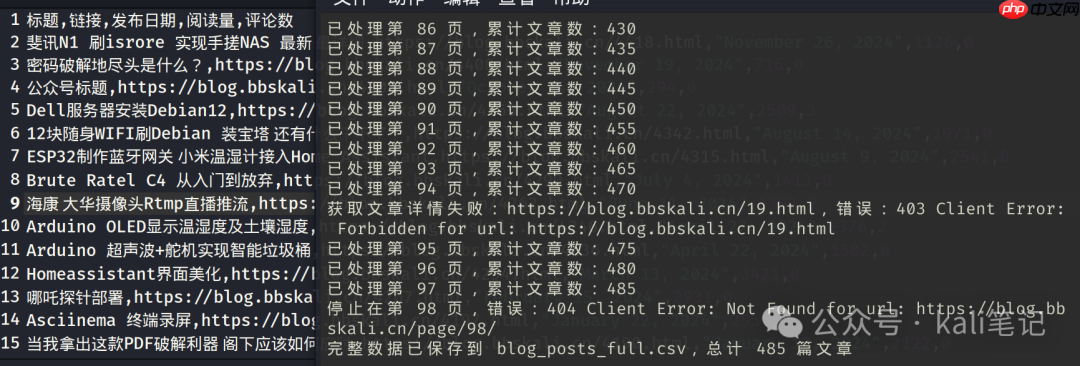

实际运行效果

实际运行效果

完整代码如下

完整代码如下

import requests

from bs4 import BeautifulSoup

import csv

import re

import time

<p>def get_post_details(url, headers):

try:

response = requests.get(url, headers=headers)

response.raise_for_status()

except Exception as e:

print(f"获取文章详情失败:{url},错误:{str(e)}")

return None, None, None</p><p>soup = BeautifulSoup(response.text, 'html.parser')</p><h1>提取发布时间</h1><p>date<em>li = soup.find('li', class</em>='meta-date')

post_date = date_li.find('time').text.strip() if date_li else ''</p><h1>提取阅读量</h1><p>views<em>li = soup.find('li', class</em>='meta-views')

views = 0

if views_li:

views_text = views<em>li.find('span', class</em>='meta-value').text

match = re.search(r'(\d+)', views_text.replace(' ', ' '))

views = match.group(1) if match else 0</p><h1>提取评论数</h1><p>comments<em>li = soup.find('li', class</em>='meta-comments')

comments = 0

if comments_li:

comments_tag = comments<em>li.find('a', class</em>='meta-value') or comments<em>li.find('span', class</em>='meta-value')

if comments_tag:

match = re.search(r'(\d+)', comments_tag.text)

comments = match.group(1) if match else 0</p><p>return post_date, views, comments</p><p>def get_all_posts():

base_url = "<a href="https://www.php.cn/link/2c2c5fd01b61e3e0e687573af8f7e1fa">https://www.php.cn/link/2c2c5fd01b61e3e0e687573af8f7e1fa</a>"

headers = {

'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/58.0.3029.110 Safari/537.3'

}</p><p>all_data = []

page_num = 1</p><p>while True:</p><h1>构造分页链接</h1><pre class="brush:php;toolbar:false;"> url = f"{base_url}/page/{page_num}/" if page_num > 1 else base_url

try:

response = requests.get(url, headers=headers)

response.raise_for_status()

except Exception as e:

print(f"停止在第 {page_num} 页,错误:{str(e)}")

break

soup = BeautifulSoup(response.text, 'html.parser')

posts = soup.find_all('div', class_='post_title_wrapper')

if not posts:

break

for post in posts:

h2_tag = post.find('h2', class_='index-post-title')

if not h2_tag:

continue

a_tag = h2_tag.find('a')

if a_tag and a_tag.has_attr('href'):

title = a_tag.text.strip()

link = a_tag['href']

# 获取文章详细信息

post_date, views, comments = get_post_details(link, headers)

# 汇总数据

all_data.append([

title,

link,

post_date,

views,

comments

])

# 添加请求间隔,避免对服务器造成压力

time.sleep(0.5)

print(f"已处理第 {page_num} 页,累计文章数:{len(all_data)}")

page_num += 1with open('blog_posts_full.csv', 'w', newline='', encoding='utf-8-sig') as f: writer = csv.writer(f) writer.writerow(['标题', '链接', '发布日期', '阅读量', '评论数']) writer.writerows(all_data)

return len(all_data)

if name == "main": count = get_all_posts() print(f"完整数据已保存到 blog_posts_full.csv,总计 {count} 篇文章")

""" 已处理第 1 页,累计文章数:10 已处理第 2 页,累计文章数:20 ... 完整数据已保存到 blog_posts_full.csv,总计 56 篇文章 """

总结:

借助DeepSeek,我们可以轻松生成所需的爬虫代码。当然,前提是我们必须清晰准确地描述需求!

以上就是不会写代码 用DeepSeek实现爬虫的详细内容,更多请关注php中文网其它相关文章!

Copyright 2014-2025 https://www.php.cn/ All Rights Reserved | php.cn | 湘ICP备2023035733号